Euclidean neural networks

What is e3nn?

e3nn is a Python library based on PyTorch for creating equivariant neural networks for the group \(O(3)\).

Where to start?

- Guide to the e3nn.o3.Irreps: Irreducible representations
- Guide to implement a Convolution
- The simplest example to start with is Tetris Polynomial Example.
- Guide to implement a Transformer

Demonstration

All functions for manipulating rotations (rotation matrices, Euler angles, quaternions, conversions, …) can be found in Parametrization of Rotations.

The irreducible representations of \(O(3)\) (more info at Irreps) are represented by the class e3nn.o3.Irrep.

The direct sum of multiple irreps is described by an object e3nn.o3.Irreps.

If two tensors \(x\) and \(y\) transform as \(D_x = 2 \times 1_o\) (two vectors) and \(D_y = 0_e + 1_e\) (a scalar and a pseudovector) respectively, where the subscripts \(e\) and \(o\) stand for even and odd (the representation of parity),

```python
import torch
from e3nn import o3

irreps_x = o3.Irreps('2x1o')
irreps_y = o3.Irreps('0e + 1e')

x = irreps_x.randn(-1)
y = irreps_y.randn(-1)

irreps_x.dim, irreps_y.dim
```

```
(6, 4)
```

their outer product is a \(6 \times 4\) matrix with two indices, \(A_{ij} = x_i y_j\).

```python
A = torch.einsum('i,j', x, y)
A
```

```
tensor([[ 0.2524, -0.2548,  1.2532,  0.0801],
        [ 0.6410, -0.6470,  3.1825,  0.2033],
        [-0.5576,  0.5629, -2.7686, -0.1769],
        [ 0.3426, -0.3458,  1.7011,  0.1087],
        [ 0.2690, -0.2715,  1.3356,  0.0853],
        [-0.3854,  0.3890, -1.9136, -0.1222]])
```

If a rotation is applied to the system, this matrix transforms with the representation \(D_x \otimes D_y\) (the tensor product representation), which can be computed and plotted as follows:

R = o3.rand_matrix()

D_x = irreps_x.D_from_matrix(R)

D_y = irreps_y.D_from_matrix(R)

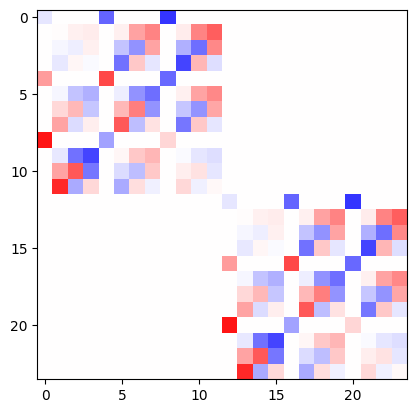

plt.imshow(torch.kron(D_x, D_y), cmap='bwr', vmin=-1, vmax=1);

This representation is reducible (not irreducible): a change of basis decomposes it into a direct sum of irreps. The outer product followed by this change of basis is implemented by the class e3nn.o3.FullTensorProduct.

```python
tp = o3.FullTensorProduct(irreps_x, irreps_y)
print(tp)

tp(x, y)
```

```
FullTensorProduct(2x1o x 1x0e+1x1e -> 2x0o+4x1o+2x2o | 8 paths | 0 weights)
tensor([ 1.5882,  0.5008,  0.2524,  0.6410, -0.5576,  0.3426,  0.2690, -0.3854,
         2.1014,  0.3414,  1.3436,  1.4135,  0.1983,  1.3948,  0.4546,  0.4286,
         2.7747, -1.8139,  0.0551,  0.3519,  1.0108,  1.2816, -1.2928,  0.1581])
```

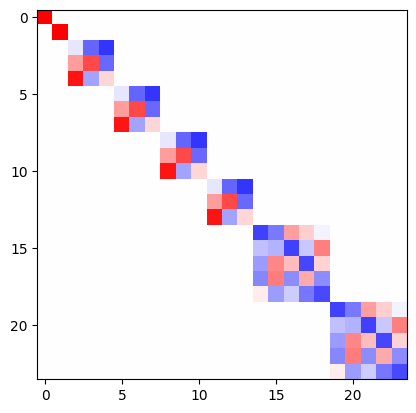

As a sanity check, we can verify that the representation of the tensor prodcut is block diagonal and of the same dimension.

D = tp.irreps_out.D_from_matrix(R)

plt.imshow(D, cmap='bwr', vmin=-1, vmax=1);

e3nn.o3.FullTensorProduct is a special case of e3nn.o3.TensorProduct. Other special cases, such as e3nn.o3.FullyConnectedTensorProduct, contain learnable weights, which makes them very useful for building neural networks.